this post was submitted on 25 Oct 2024

348 points (97.0% liked)

Curated Tumblr

3964 readers

790 users here now

For preserving the least toxic and most culturally relevant Tumblr heritage posts.

Image descriptions and plain text captions of written content are expected of all screenshots. Here are some image text extractors (I looked these up quick and will gladly take FOSS recommendations):

-web

-iOS

Please begin copied raw text posts (lacking a screenshot that makes it apparent it is from Tumblr) with:

# This has been reposted here to Lemmy as part of the "Curated Tumblr Project."

I made the icon using multiple creative commons svg resources, the banner is this.

founded 1 year ago

MODERATORS

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

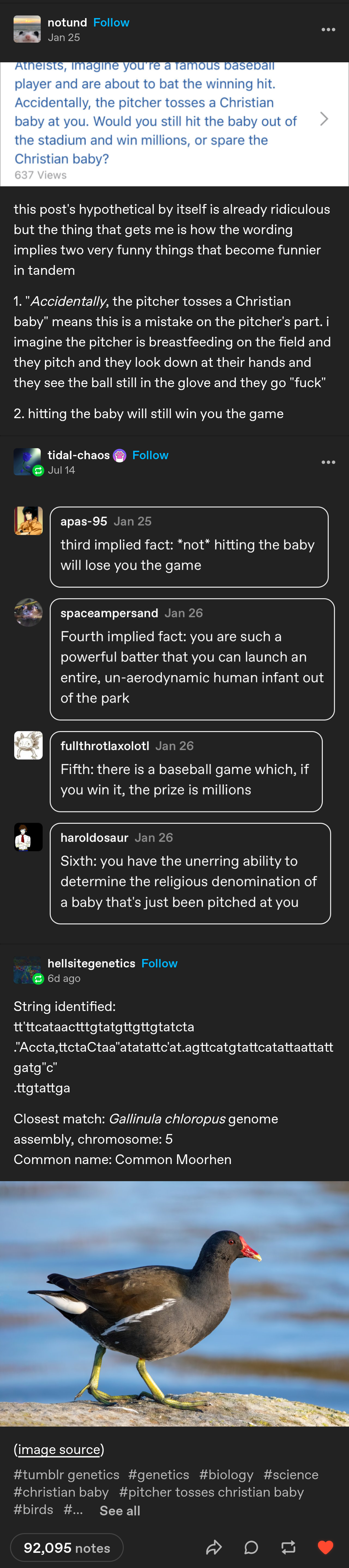

A bot strips away all spaces and letters that aren't A, T, C or G, then treats the rest like a genetic sequence and checks it against some database.

Presumably, it runs through many terabytes of data for each comment, as the Gallinula chloropus alone has about 51 billion base pairs, or some 15 GiB. The Genome Ark DB, which has sequences of two common moorhens, contains over 1 PiB. I wonder if a bored sequencing lab employee just wrote it to give their database and computing servers something to do when there is no task running.

No, I won't download the genome and check how close the "closest match" is but statistically, 93 base pairs are expected to recur every 2^186^ bits or once per 10^40^ PiB. By evaluating the function (4-1)^m^ × mℂ93 ≥ 4^93^ ÷ (pebi × 8), one can expect the 93-base sequence to appear at least once in a 1 PiB database if m ≥ 32 mismatches or over ⅓ are allowed. Not great.

This assumes true randomness, which is not true of naturally occuring DNA nor letters in English text, but should be in the right ballpark. Maybe fewer if you account for insertions/deletions.

The FAQ on the user's page says:

They are not a bot, just neurodivergent

They're using BLAST

ie, this

https://blast.ncbi.nlm.nih.gov/Blast.cgi

They did not code anything beyond a very simple regex function that strips down posts to a t c g, and then they copy paste it into the above website, then copy paste the output.

Hell, you can see they aren't even removing apostrophes and quotes, not even forcing it to all lower case or all upper case, removing spaces and line breaks...

... as a former database admin/dev/analyst, I was losing my fucking mind at the notion that someone with direct access to a genomics DB, would just hook it up to tumblr, via an automated bot, and spam the db with non work related requests, all on their own, when they can barely modify a string correctly.

Thank fucking god this is just using a publicly available, no doubt extremely low fidelity, watered down search via an API.

... You need literal, state of the art, absurdly expensive, power hungry, and secure supercomputers to be able to do genomic comparisons.

Probably one of the dumbest things you could do, quickest way to get fired, and then never be able to work in the field again, would be for a random genomics lab worker who does not know how to code to open up a whole bunch of security holes and cost god knows how much money (and damage if you write bad code) running frivolous bs searches in their state of the art genomics db... for a tumblr bot.

Not a bot, just neuro

Hilarious every time.

I mean, I am also autistic, so thanks for perpetuating the social stigma against neurodivergent people, I guess.

I thought it was funny. I'm a typical. Have had several relationships with neurodivergent people, including my wife.

I do find a lot of the quirks funny or cute. Was just giving my girl shit about the Princess and the Pea because she is extremely particular about her pillow situation. The pillows and stuffies have names. That shit is funny and it makes me grin when I have to help sort the pile.

Why do you find it offensive?

Well, your story about finding certain attributes about your wife is an entirely different context, and you didn't use the term as a pejorative.

The person I am responding to used the term as a pejorative, in reference to how a neurodivergent person could easily be confused with an automated bot.

This is inherently dehumanizing.

It's dismissive, it equates neurodivergent people to being sterile, non emotional beings who only exist to perform complex technical tasks.

This in and of itself is a common stereotype of certain kinds of people with certain kinds of neurodiversity, but neurodiverse actually refers to a much broader range of... different styles of cognitive function, different disorders, whatever you want to call them.

So, now on top of using the term as a pejorative, contextually perpetuating a specific dehumanizing stereotype... it also equivocates a diverse group of people into an oversimplified conglomerate, which in and of itself perpetuates other stereotypes by erroneously associating aspects that may (or may not) apply to a specific subset of neurodiverse people... to all of them.

I guess I see where you're coming from. Labels can hit different, especially when the label doesn't fit all the recipients. Being labeled can cause offense. Especially if it's derogatory. I don't think it was meant to be derogatory by op, but it certainly wasn't very sensitive.

The difficult part is that it's a spectrum. Especially when it comes to level of function. Profound autism is a totally different animal from high functioning people. And there is a whole spectrum of differences in how the divergency manifests between individuals.

Savantism and savant-like actions are fascinating to a lot of typicals, myself included. That level of focus and ability to make the connections or internally churn the information is not an accessible state for most of us. It's like seeing real magic.

(Obviously, not all neurodivergent folks have savant-like behaviors, most likely just a minority. No idea of the prevalence.)

So, a neurodivergent person inputting letters scraped from Tumblr posts into a genome search engine is funny as hell because it's such a strange thing to do and produces an interesting result. Why would someone do that? Why would you even think to do it in the first place?

My wife does absolutely hilarious shit all the time. Our house is full of laughter. She's wickedly sarcastic and full of black humor.

So, given that I think some of the behaviors are awesome while being hysterically funny, what is an inoffensive way to engage in humor about neurodivergent folks, in your opinion? Are there any preferred terms that are shorthand for: "Autistic person pulled some fucked up logic trick or other stunt"?

I realize you expand on this in the rest of your response... but if you had only said this...

Imagine saying that to a black man in the 60s in the south who just got called 'boy'.

Imagine saying this to Chinese person in the 40s who just got called a 'Jap' or a 'Nip'.

Imagine saying this to a person with Downs Syndrome in the 90s who just got called 'a retard'.

... When people, who have unalterable traits, tell you that they do not appreciate being stereotyped, having certain words used to describe them or people like them, or erroneously lumped in as the same as them, in certain contexts and ways... the decent thing to do is just listen to them and not demand an explanation why they find such things offensive.

Anyway, I believe you when say that you have had relationships with neurodiverse people, that you truly love your wife, that her quirks are a source of joy for you.

I do not mean to be offensive, but you describe neurodiverse people in a... typical way that a genuinely well intentioned neurotypical person who has actually gone out of their way to learn about and personally knows neurodiverse people would.

... I am apparently quite an oddity in that I am a high functioning autistic person. I don't like to use the term 'savant' because it connotes that I am some kind of super genius. I'm not a super genius.

I have two college degrees, I consider myself more intelligent than others in many ways, but absolutely less intelligent or capable in others.

As an example of the latter... there is basically no way I could have this exchange with you in person, over the phone or video conference.

I would get too flustered and trip over my words. I would interject when I believe you are pausing to allow me to speak, but in actuality you were not expecting that and would find my interjection rude.

EDIT: To further this point, I think I've spent 2 or 3 hours now, writing and rewriting almost all of this post.

I would also make connections between topics and concepts that most people think are totally unrelated non sequiturs which make no sense, although you have stated that you find such connections to be 'like seeing real magic'.

I cannot tell you the number of times I've been brushed off as a babbling loon by people who lack the patience to allow me to finish explaining the connections that occur to me, who lack the knowledge to even understand many of the concepts I connect together.

It is extremely frustrating.

In my life, its roughly a 20:1 ratio of people that just think I am babbling, to people who actually contemplate seriously what I am saying, and often respond with something akin to... 'wow. I never thought of that in that way, but that makes a lot of sense!'

My perspective on this is:

Other than inherent incongruity of the abrupt topic shift to from discussing the original image and its absurd visual metaphors... to 'suddenly, genomic sequence of bird!' being odd, out of place...

Sure, its uncommon, novel, to read the genomic post.

But why would you even ask why someone would think to do that?

That's just a thing they enjoy doing. Its a hobby.

Why do people learn to unicycle? Garden? Drive a motorcycle? Ride a horse? Build sandcastles? Learn to dance? Build minifigs? Collect fucking funko pops?

People just enjoy doing things. Sure, some are more niche and rare than others... but why is there even a question as to why someone has some specific hobby as opposed to another?

Why does an uncommon hobby warrant explanation?

How can there be an explanation beyond 'I find it entertaining or fulfilling or enjoyable?'

It would be one thing if some uncommon hobby seemed likely to engender physical or financial or mental harm to the hobbyist or other... but making a unique style of very matter of fact Tumblr posts doesn't cause any harm, and they even wrote an FAQ explaining this, which ... all you have to do is click on their name to understand what this person's deal is...

But me, apparently (?) the only other neurodiverse person in this thread, took that basic step... while all the neurotypicals preferred to just invent their own explanations, come to their own conclusions or commentary based off of hunches and intuition, without doing even a cursory investigation to determine if their ideas had any real basis in fact.

Well... don't use pejoratives? Don't use labels when they don't need to be used, when they aren't especially necessary? Address people by their names? Don't present then as useless invalids, or emotionless robots?

Maybe present ... constructive compare and contrast scenarios, where a neurotypical picks up on something an ND wouldn't, and the the reverse happens?

Like... I laugh when why wife does X... but she laughs when I do Y... and when she explains why she finds Y funny to me, I come to humourous realization Z1 about her... and humorous realization Z2 about myself.

??? I dunno, I don't know how to write a comedy set, I generally do not socialize much IRL.

Day late. Was totally wiped yesterday and took a down day with the girl on the couch.

I wouldn't quite say I'm demanding an explanation. I would hope you see it as having a discussion with a typical who is genuinely trying to understand and is willing to modify behaviors if they are problematic. I was raised in profound ignorance by religious fundamentalists in the deep south. Sprinkle in racism and pretty much all the other "isms". I've worked very hard to make my way out that ignorance. Asking lots of questions has served me well. I feel like that open questioning and willingness to try and learn is part of the reason I tend to get along so well with people on the spectrum as well as making my way out.

I also have a whole heap of monkey curiosity. Figure it's a good thing. Want to know and learn about other's cultures, experiences, and mental states. In no way am I trying to be aversive in this conversation, you just seemed to be willing to talk and I'm satisfying that curiosity. I like different people. Please don't feel like I'm demanding your time or attention. If you need to tell me to fuck off, that's cool, won't hurt my feelings. If you need to space out your responses, I don't need an immediate reply, take as long as you want.

Yup. Glad you see it that way. My wife and I are heavily involved in the BDSM community and are poly. I don't know what it is, but you can't throw a stick without hitting a neurodiverse person within our subculture. Neurodiverse individuals are far more common than in the general population. At a guess, 25%; certainly greater than 10% but less than half. It is common for neurodiverse folks to be in positions of leadership or longstanding respected members of the community. Another commonality among neurodiverse folks that I've noticed is that they are far more likely to be bi or some sort of trans.

I've actually known quite a few high functioning autistic people. Wife is some sort of neurodiverse, our former partner was high functioning autistic. Anyhow, savant or savant-like behaviors. Yeah, it's not a great term. But, you know what I mean. It's totally a thing. As a typical, I'm unable to make those leaps and do find it endlessly fascinating. Y'all's brains are working on a different wavelength, and aren't even on the same wavelength as each other. In this instance, we are looking at someone who is sorting pseudo random text into genetic code and then finding a genetic match. That's a wonderfully weird thing to do. My questions about 'Why' would they do that are rhetorical. Those behaviors, even as a hobby, would never occur to me in a million years.

I suppose that's what I'm really trying to ask. You'll see the term 'autist' used as an explanation, 'neuro' in this case. You, and other autistic people, can find this offensive, and rightfully so. Is there an inoffensive term for these behaviors?

In private playful conversations with my wife, it's not uncommon for me to call her a "fucking autist" or call her actions 'autistic.' This might also occur among close friends. It's absolutely a slur. I would never, ever, do so publicly. Note that she calls me 'old man', calls me 'bald' or makes fun of my baldness, makes fun of my ileostomy, makes fun of my accent and so on. We use slurs, in play, for each other all the time. Publicly, it's very common that I'm the butt of the group jokes because it's obvious that it doesn't bother me and I'm an easy and willing target for that sort of humor. (Average height/weight cis-het appearing, bald, white guy with a great beard/moustache and a southern accent.) I have a gruff demeanor so it's a lot of fun for people to poke at me, especially neurodiverse folks, as they know that I'm a safe target by example from my wife and friends. (Think Jaimie from Mythbusters.)

That's a whole other thing that happens to me with the neurodiverse. The pure fucking joy they get from playfully picking on me is something else. It's apparently quite a thrill. Very timid about calling me bald, old, or whatever at first. My wife or friends are usually the ringleaders. I guess it feels subversive or something? I just growl, grumble or frown back with the very timid.

My wife and I have a lot of back-and-forth with this sort of thing. Our relationship is very kinky and this is how we flirt. She initiates by picking on me, gets in 'trouble', I put her in her place or give her a swat and a kiss. I initiate by picking on her or giving her a swat, she pouts about how I don't love her or how mean I am, and I kiss her better. She wants that tingle of fear and then the comfort. Note, we've never had a real raised voice argument. We communicate very well. Real relationship issues are handled in an adult manner through discussion including cooling off, if needed. This is our relationship and communication style, it has grown organically between us and isn't a one-size-fits-all.

With the other neurodiverse folks I've been close to, including our former autistic partner, I've basically found that we create almost our own dialect between us. I feel like, as a typical, that I have some gifts in being able to communicate and modify my communication style to theirs. I enjoy it and don't mind stretching myself to their preferred communication style and level of comfort.

So, there are hurtful slurs that describe a common behavior among neurodiverse folks. When trying to be inoffensive, I've called it savant or savant-like behavior. It's the sort of behavior that a typical would almost certainly never engage in. As a typical, when it's pointed out that the person that engages in the behavior is neurodiverse, it's an 'aha' moment often mixed with humor. It would be nice if it had an inoffensive label.

Yes, with you, I do see it that way, because you have very much indicated a genuine desire to understand and learn.

That is why I prefaced that section with "If you had only said this, my response would be blah blah."

It was a hypothetical. I felt it was worth writing out because a lot of people do the 'why are you so offended?' as a careless, uninquisitive attempt to paint the offended person as basically unreasonable and hysterical.

I believe you, appreciate you saying it, and have the same sentiment toward you. =)

My theory on this is basically:

neurodiverse people tend to basically just inherently give less of a shit about existing predominant social and lifestyle norms, as we tend to view them as just then currently existing 'rules' of society, which have objectively changed and shifted over time and place... basically we are more likely to see many social rules as arbitrary, very often justified by nonsense.

we are more likely to be shunned and misunderstood by society in general, so we are more likely to gravitate toward some kind of smaller, accepting, 'found family' type of social environment.

I do know what you mean, I understand.

I am just very, very used to people in my life treating me in an absolute, binary way.

Either I am a genius who is just expected to solve absurdly complex theoretical problems for them, which takes hours and hours of time, and this is just expected of me, without any compensation or recognition or thanks, whatsoever...

Or, I get 'Sir this is a Wendy's', laughed at and mocked when I do it of my own accord and they entirely don't fucking care whatsoever.

A whole lot of people use a rhetorical question as basically a derisive, sarcastic insult.

But not all people. Some people ask what others view as rhetorical questions as literal, actual questions. They actually want an answer.

And some people use it as an equivalent of a completely different statement or emotional expression.

In your case, when you ask 'why would someone do this?' what you actually mean is 'wow, that's so wild, I would never think anyone would do this as a hobby!'.

But many others use 'why would you do that?' to mainly indicate anger or shock... instead of just... saying that they are angry and shocked. In that case, basically there is no acceptable, meaningful response, (beyond emotionally sympathizing with the rhetorical question asker) despite it being phrased as a question, which prompts a response.

Sometimes some people do all of these things, at different times, and some people people will start off with one intention and then in the same conversation flip it around to other intentions.

This is outstandingly confusing to me, because almost everyone has a different way they will tell you that languages and phrases like this work when they and other people use them, but they all insist their way they is the way everyone acts.

The reality is that everyone sends and reads context and intonation clues differently to specify the actual intent behind a rhetorical question... but basically all neurotypicals act like the way they do it is the objective universal standard.

This has actually been studied and is not me just making up an 'opinion' here.

Neurotypicals, on average, misinterpret roughly 50% of ambiguous social cues during conversation, but generally estimate that they only miss around 10%.

Neurodivergents are much more likely to ask for clarity when they reach a social cue they realize is ambiguous, and are likely to get a rude, dismissive, angry... some kind of negative response for doing so, from a neurotypical.

There absolutely are behavior patterns that are objectively, correctly associated with certain 'diagnoses' of specific kinds of neurodivergence.

With 'neuro' as a blanket term, you run into the whole problem of conflating a particular diagnosis's patterns with each other, as I've already outlined.

But anyway....as with other terms that can be, but are not always used as slurs... intent, context, manner of usage, who is using it... all that stuff matters as well.

"The autist has an esoteric, information/analysis centric hobby, that's so hilarious, of course he does, hahah!"

... is different than

"Oh, the guy with the information/analysis centric hobby is autistic. Well, that makes sense."

The former is laughing at someone for... doing what would be completely expected for that person to do. It is mockery.

The latter is just noting that a completely reasonable explanation is reasonable.

Its just stereotype insult comedy, the bottom of the barrel, cheapest way to get a laugh out of an audience that doesn't mainly consist of the demographic you're insulting, but is only aware of stereotypes.

... If you told a joke that was maybe more involved, maybe played off of how a compounding series of autistic behavior patterns combined in some way to lead to an absurd problem, or maybe cancel each other out in a non obvious way... that would probably be more likely to not be viewed as just an insult, as relatable, funny.

If you're just looking to swap out a word with another word that has exactly the same meaning ... then nothing changes.

You're now just saying "thought it was a bot, turns out its a skrimbloob. hilarious every time!"

Still has the same intent and meaning.

I often use the term Autist to describe myself when I realize I've misread a social cue or am doing waaay more investigation and analysis into a topic than most people would do.

I'm not insulting myself.

I'm just stating it as an accurate descriptor.

Its fine to use the term if you are not using it to infantilize, to dismiss, to deride, to mock someone or something simply because they or the behavior is are autistic.

I am not you or your wife, but I would find this offensive and toxic, on both sides.

I really don't like relationships where a normal component of them is insults.

But apparently for yourself and your wife, other people you are close with, this is normal in private, and you ... know it is offensive to do so in a public setting where people may overhear it.

... I know a lot of people have relationships where 'playful' insults are normalized to the point where its 75% or more of the spoken exchanges.

I have never understood this, and I don't like being in such situations.

An occasional playful jab is one thing, but I've been in waaaay too many romantic and platonic relationships where it occurs basically all the time.

But if it works for you and her, great, I'm not gonna say its objectively bad, just that I wouldn't enjoy that kind of dynamic at all.

Again, I don't get this.

I would think those neurodiverse people are being needlessly cruel.

As an Autist, I don't see why it would be mixed with humor.

Why did the person go to the party?

Because they're more extraverted than introverted.

Ha... ha?

Why did the other person bring so many cooking ingredients to that party?

Because they're a trained chef.

Haha wow that's ... so... unexpected? .. ???

Just a brief note. Today and this evening are going to be very busy for me. I don't have time to give your post the response it deserves and will do so later. Probably tomorrow.

No problem! I appreciate that you care to write an in depth response. Hope all your activities go well =D

Wow. Just wow. Someone is still using CGI.

Wayback Machine's earliest capture is from 2008.

It's a cutesy, public facing, extremely limited and low fidelity 'demo version' of a genomic search, basically made as a PR / Science Education promotion gimmick... by government contracted web/backend devs, in 2008.

Honestly its a miracle its still functional at all.

That's hilarious, but I needed the explanation too. Thanks!

The genomes have likely been indexed to make finding results faster. Google doesn't search the entire internet when you make a query :P

I know that similar computational problems use indexing and vector-space representation but how would you build an index of TiBs of almost-random data that makes it faster to find the strictly closest match of an arbitrarily long sequence? I can think of some heuristics, such as bitmapping every occurrence of any 8-pair sequence across each kibibit in the list. A query search would then add the bitmaps of all 8-pair sequences within the query including ones with up to 2 errors, and using the resulting map to find "hotspots" to be checked with brute force. This will decrease the computation and storage access per query but drastically increase the storage size, which is already hard to manage.

However, efficient fuzzy string matching in giant datasets is an interesting problem that computer scientists must have encountered before. Can you find a good paper that works well with random, non-delimited data instead of just using the approach of word-based indices for human languages like Lucene and OpenFTS?

As per my other post, this person isn't doing any of that.

But, since you asked for papers on generic matching algorithms, I found this during the silent conniption fit you sent me into after suggesting that some random tumblr user plugged a tumblr bot directly into a state of the art genomics db.

https://link.springer.com/article/10.1007/s11227-022-04673-3

Please note that while, yes, they ran this test on a standard office computer, they were only searching against 12 million characters.

A single tebibyte of characters would be more like 1 trillion characters. A pebibyte would be more like 1 ~~quintillion~~ quadrillion.

... much, much, much longer processing times.

Edit: Used the wrong word for stupendously large numbers that start with q.

Yeah good point, not a trivial undertaking. I'm not an expert in that area but maybe elasticsearch or similar technology is able to find matches. Although I have no idea how that works under the hood

It’s probably just ncbi