Snapshots are the first line of defense for recovering from software errors. For hardware failures, use ZFS RAID.

That still isn't a proper backup. Keep a separate backup that cannot easily be destroyed.

¯\_(ツ)_/¯ Yeah. It is kinda hard.

Backups. First and foremost.

Now, once that is sorted: what if your DB gets corrupted? You test your backups.

Learn how to verify and restore.

It is a hassle. That’s why there is a constant back and forth between on prem and cloud in the enterprise
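One way to make "verify and restore" concrete is to restore a backup into a scratch directory and compare checksums against the live data. Below is a minimal Python sketch of that idea; the function names (`file_digest`, `tree_digests`, `compare_trees`) are made up for illustration, and it assumes a plain file-level backup rather than a database dump, which would need its own consistency check.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of one file, read in 1 MiB chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def tree_digests(root: Path) -> Dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    return {
        p.relative_to(root).as_posix(): file_digest(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def compare_trees(original: Path, restored: Path) -> Dict[str, List[str]]:
    """Report files that are missing, unexpected, or different after a restore."""
    a, b = tree_digests(original), tree_digests(restored)
    return {
        "missing": sorted(a.keys() - b.keys()),   # in original, not restored
        "extra": sorted(b.keys() - a.keys()),     # restored, not in original
        "changed": sorted(k for k in a.keys() & b.keys() if a[k] != b[k]),
    }
```

If all three lists come back empty, the restore matches the source at the time of comparison. Files that legitimately changed since the backup ran will show up under "changed", so this works best against a snapshot or a quiet dataset.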

My setup is pretty safe. Every day it copies the root file system to its RAID. It copies them into folders named after the day of the week, so I always have 7 days of root fs backups. From there, I manually back up the RAID to a PC at my parents’ house every few days. This is started from the remote PC so that if any sort of malware infects my server, it can’t infect the backups.
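The day-of-week rotation described above can be sketched in a few lines of Python. This is only an illustration: the function name `weekday_backup` and its arguments are hypothetical, and the poster does not say which tool does the copying. For a real root filesystem, a tool that preserves ownership and skips special files (rsync, for example) would be a better fit than `shutil.copytree`.

```python
import datetime
import shutil
from pathlib import Path
from typing import Optional

# Fixed names so the folder layout does not depend on the system locale.
DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
        "Friday", "Saturday", "Sunday"]


def weekday_backup(source: Path, backup_root: Path,
                   today: Optional[datetime.date] = None) -> Path:
    """Copy source into backup_root/<weekday>, replacing last week's copy."""
    today = today or datetime.date.today()
    target = backup_root / DAYS[today.weekday()]
    if target.exists():
        shutil.rmtree(target)  # drop the copy made seven days ago
    shutil.copytree(source, target, symlinks=True)
    return target
```

Run once a day (from cron or a systemd timer, say), this keeps at most seven copies, one per weekday folder, and each run overwrites the copy from the same day last week.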

Off-site backups that are still local are brilliant.

Pretty solid backup strategy :) I like it.

A main 'server' (an HP Prodesk 600 G3 MT) and a Z440 Workstation as a 'heavy load' machine. Everything on Arch testing. The most unstable thing there is, theoretically, and yet it hasn't failed me once. The security comes from me, sitting right next to it with spare servers and multiple backup locations. But I plan on a Proxmox cluster, preferably for both kinds of servers.

Acronyms, initialisms, abbreviations, contractions, and other phrases which expand to something larger, that I've seen in this thread:

| Fewer Letters | More Letters |
|---|---|
| DNS | Domain Name Service/System |
| Git | Popular version control system, primarily for code |
| HA | Home Assistant automation software |
| ~ | High Availability |
| HTTP | Hypertext Transfer Protocol, the Web |
| IP | Internet Protocol |
| LVM | (Linux) Logical Volume Manager for filesystem mapping |
| LXC | Linux Containers |
| NAS | Network-Attached Storage |
| PSU | Power Supply Unit |
| Plex | Brand of media server package |
| RAID | Redundant Array of Independent Disks for mass storage |
| RPi | Raspberry Pi brand of SBC |
| SBC | Single-Board Computer |
| SSH | Secure Shell for remote terminal access |
| VPS | Virtual Private Server (opposed to shared hosting) |
| ZFS | Solaris/Linux filesystem focusing on data integrity |
| nginx | Popular HTTP server |

15 acronyms in this thread; the most compressed thread commented on today has 3 acronyms.

[Thread #821 for this sub, first seen 21st Jun 2024, 17:05]

I got tired of having to learn new things. The latest was a reverse proxy that I didn't want to configure and maintain. I decided that life is short and to just use Samba to serve media as files. One lighttpd server for my favourite movies so I can watch them from anywhere. The rest I moved to free online services or apps that sync across mobile and desktop.

Unfortunately, I feel the same. As I observed from the commenters here, self-hosting that won't break seems very expensive and laborious.

Reverse proxy is actually super easy with nginx. I have an nginx server at the front of my server doing the reverse proxy and an Apache server hosting some of those applications being proxied.

Basically 3 main steps:

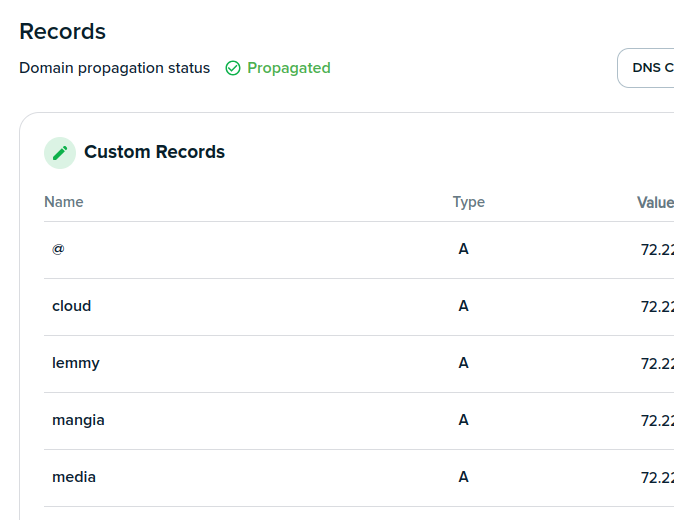

Setup up the DNS with your hoster for each subdomain.

Setup your router to port forward for each port.

Setup nginx to do the proxy from each subdomain to each port.

DreamHost let's me manage all the records I want. I point them to the same IP as my server:

This is my config file:

    server {
        listen 80;
        listen [::]:80;

        server_name photos.my_website_domain.net;

        location / {
            proxy_pass http://127.0.0.1:2342;
            include proxy_params;
        }
    }

    server {
        listen 80;
        listen [::]:80;

        server_name media.my_website_domain.net;

        location / {
            proxy_pass http://127.0.0.1:8096;
            include proxy_params;
        }
    }

And then I have Docker containers running on those ports.

    root@website:~$ sudo docker ps
    CONTAINER ID   IMAGE                          COMMAND                  CREATED       STATUS       PORTS                                                          NAMES
    e18157d11eda   photoprism/photoprism:latest   "/scripts/entrypoint…"   4 weeks ago   Up 4 weeks   0.0.0.0:2342->2342/tcp, :::2342->2342/tcp, 2442-2443/tcp   photoprism-photoprism-1
    b44e8a6fbc01   mariadb:11                     "docker-entrypoint.s…"   4 weeks ago   Up 4 weeks   3306/tcp                                                       photoprism-mariadb-1
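If nginx returns a 502, it can help to check that the backend is actually listening on the port that `proxy_pass` points at before touching the proxy config. A small Python sketch of that check follows; `port_is_open` is a hypothetical helper name, and 2342 and 8096 are simply the ports from the config above.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


# Example, run on the server itself:
#   for port in (2342, 8096):
#       print(port, port_is_open("127.0.0.1", port))
```

This only confirms that something accepts TCP connections on the port, not that the application behind it is healthy.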

So if you go to photos.my_website_domain.net, DNS sends you to the same IP as my_website_domain.net. My nginx server kicks in, sees from the hostname that you want the 'photos' subdomain, and proxies the request to http://127.0.0.1:2342 on the server, which is my PhotoPrism instance. So you could use http://my_website_domain.net:2342 or http://photos.my_website_domain.net. Either one works, as long as port 2342 is also forwarded. The reverse proxy is the shortcut.
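To make the routing decision concrete, here is a rough Python sketch of what nginx does with the two `server` blocks: it looks at the request's `Host` header and picks the matching backend. This is a simplification for illustration, not nginx's actual matching logic (no wildcards, regexes, or IPv6 literals), and `UPSTREAMS` and `pick_upstream` are made-up names.

```python
from typing import Optional

# server_name -> proxy_pass target, mirroring the two server blocks in the config
UPSTREAMS = {
    "photos.my_website_domain.net": "http://127.0.0.1:2342",
    "media.my_website_domain.net": "http://127.0.0.1:8096",
}


def pick_upstream(host_header: str) -> Optional[str]:
    """Return the backend URL for a request's Host header, or None if no match."""
    # Host may carry an optional ":port" suffix and is case-insensitive.
    hostname = host_header.strip().lower().split(":", 1)[0]
    return UPSTREAMS.get(hostname)
```

Where this sketch returns `None`, nginx would instead hand the request to its default server for that port.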

Hope that helps!

Screw nginx and its configuration file. It's anything but easy if you want anything other than the default config for the app you want to serve.

I only use it for reverse proxies. I still find Apache easier for web serving, but terrible for setting up reverse proxies. So I use the advantages of each one.