this post was submitted on 05 Oct 2024

Science Memes

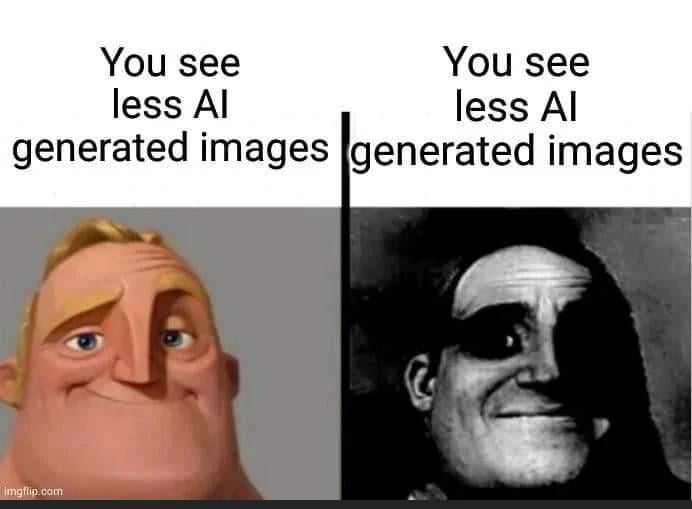

Alt text: a beautiful girl on a dock at sunset with some fugly hands and broken ass fingees

Honestly, auto-generating text descriptions for visually impaired people is probably one of the few potential good uses for LLM + CLIP. Being able to have a brief but accurate description without relying on some jackass to have written it is a bonefied good thing. It isn't even eliminating anyone's job, since the jackass doesn't always do it in the first place.

I am so sorry, and I agree with your point, but I really had a good laugh at my mental image of a bonefied good thing :-)

If you already know or it's autocorrect, just ignore me; if not, it's bona fide :-)

The models that do that now are very capable but aren't tuned properly IMO. They are overly flowery and sickly positive even when describing something plain. Prompting them to be more succinct only has them cut themselves off and leave out important things. But I can totally see that improving soon.

Unfortunately, the models have been trained on biased data.

I've run some of my own photos through various "lens" style description generators as an experiment and knowing the full context of the image makes the generated description more hilarious.

Sometimes the model tries to extrapolate context. For example, it will randomly decide to describe an older woman as a "mother" if there is also a child in the photo, even when a human eye could tell from context that it's more likely a teacher and a student. There's a lot a human can do that a bot can't, including having the common sense to use appropriate language when describing people.

Image descriptions will always be flawed because the focus of the image is always filtered through the description writer. It's impossible to remove all bias. For example, because of who I am as a person, it would never occur to me to even look at someone's eyes in a portrait, let alone write what colour they are in the image description. But for someone else, eyes may be super important to them, they always notice eyes, even subconsciously, so they make sure to note the eyes in their description.